King, E. (2017). Supporting gestures: Breathing in piano performance. In Music and Gesture (pp. 142–164). Routledge.

Zaccaro, A., Piarulli, A., Laurino, M., Garbella, E., Menicucci, D., Neri, B., & Gemignani, A. (2018). How breath-control can change your life: A systematic review on psycho-physiological correlates of slow breathing. Frontiers in Human Neuroscience, 12, 353.

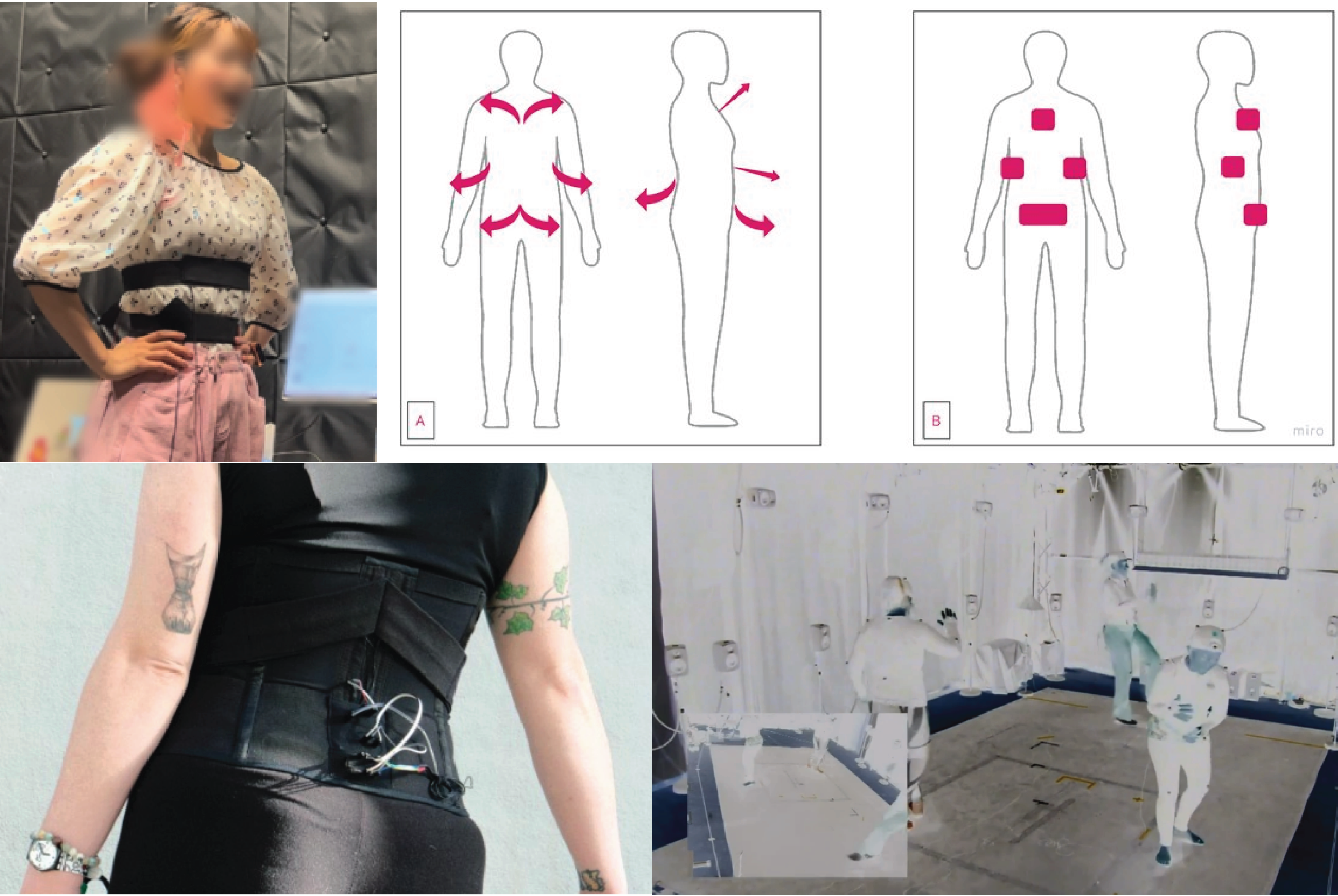

Burr, L. A., Šula, J., Mayrhauser, J., & Meschtscherjakov, A. (2023). BREATHTURES: A first step towards breathing gestures as distinct input modality. CHI Conference on Human Factors in Computing Systems, 1–6.

Scavone, G. P. (2003). The PIPE: Explorations with breath control. Proceedings of the International Conference on New Interfaces for Musical Expression, 15–18.

Nagashima, Y. (2003). Bio-sensing systems and bio-feedback systems for interactive media arts. Proceedings of the International Conference on New Interfaces for Musical Expression, 48–53.

Siwiak, D., Berger, J., & Yang, Y. (2009). Catch Your Breath: Musical biofeedback for breathing regulation. Proceedings of the International Conference on New Interfaces for Musical Expression.

Lee, J.-S., & Yeo, W. S. (2012). Real-time modification of music with dancer's respiration pattern. Proceedings of the International Conference on New Interfaces for Musical Expression.

Bhandari, R., Parnandi, A., Shipp, E., Ahmed, B., & Gutierrez-Osuna, R. (2015). Music-based respiratory biofeedback in visually-demanding tasks. Proceedings of the International Conference on New Interfaces for Musical Expression, 78–82.

Cotton, K., Sanches, P., Tsaknaki, V., & Karpashevich, P. (2021). The Body Electric: A NIME designed through and with the somatic experience of singing. Proceedings of the International Conference on New Interfaces for Musical Expression.

Piao, Z., & Xia, G. (2022). Sensing the breath: A multimodal singing tutoring interface with breath guidance. Proceedings of the International Conference on New Interfaces for Musical Expression.

Diaz, X. A., Sanchez, V. E. G., & Erdem, C. (2019). INTIMAL: Walking to find place, breathing to feel presence. Proceedings of the International Conference on New Interfaces for Musical Expression, 246–249.

Chen, K., Chang, Z., Zou, Q., & Kunze, K. (2025). Exploring Singing Breath: Physiological insights and directions for breath-aware augmentation in mixed reality design. Companion of the 2025 ACM International Joint Conference on Pervasive and Ubiquitous Computing, 702–706.

Chen, K., Panskus, R., Wu, E., Peng, Y., Saito, D., Kamiyama, E., Li, R., Liao, C.-C., Marky, K., Kato, A., Koike, H., & Kunze, K. (2026). Sensing Your Vocals: Exploring the activity of vocal cord muscles for pitch assessment using electromyography and ultrasonography. CHI Conference on Human Factors in Computing Systems.